I work as a freelance video editor, so I usually depend on high-end software to improve and upscale videos for client projects. These programs can produce great results, but they also demand strong technical skills, powerful computers, and a lot of time to learn. Over the years, I’ve built an efficient workflow with these video upscaling tools. However, I understand that this kind of setup is not realistic for most people.

Lately, many of our readers have been contacting us to ask how they can get similar results without buying costly software or learning complicated editing techniques for one edit. It became obvious that people wanted an easier option that could improve old or low-quality videos with minimal effort. That’s what pushed me to explore the best AI video upscalers, which are designed to automate the process and make video enhancement accessible to everyone.

To put together this list, I worked with several teammates from the FixThePhoto team. Together, we tested a variety of AI tools in different situations (including old family recordings and modern HD clips that needed extra refinement). We found that the strongest AI video upscalers do much more than increase resolution: they can recover missing details, reduce noise, fix motion problems, and improve sharpness and color, while also saving many hours of manual work.

Even though AI video upscalers are powerful tools, they are not perfect solutions. If used incorrectly, they can produce results that look unnatural. During our testing over the past few months, I noticed several mistakes that users often make when enhancing videos with AI. The good news is that these issues are easy to avoid once you understand how the technology works.

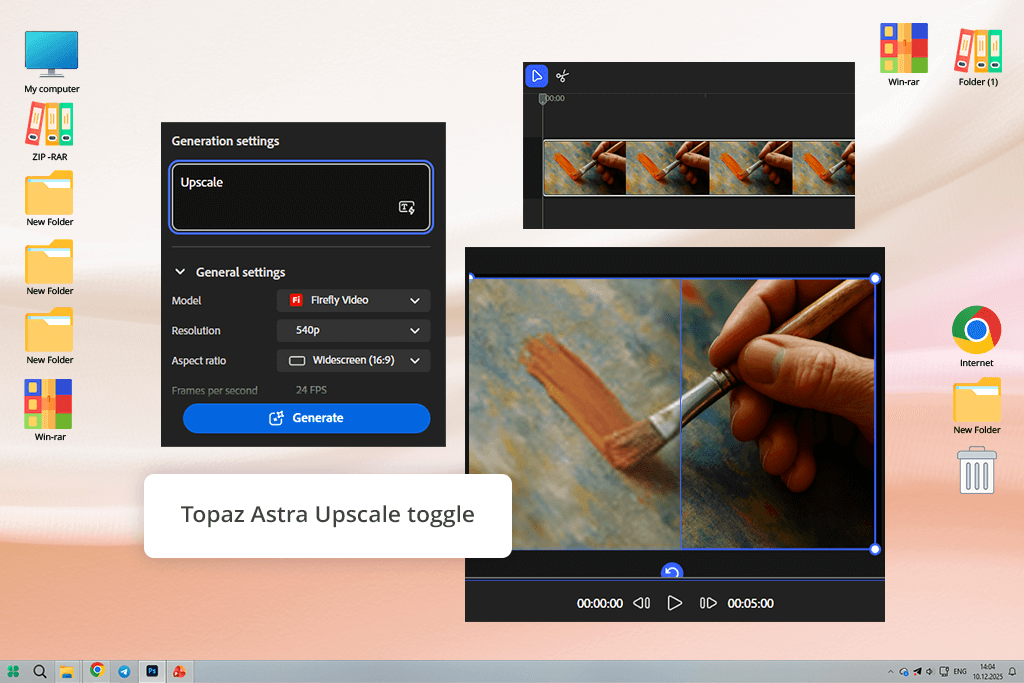

I’ve trusted Adobe products for a long time, so when I found out that Adobe Firefly added AI video upscaling using Topaz Astra, I wanted to try it myself. Combining Firefly’s smooth creative workflow with Topaz’s well-known AI upscaling technology seemed like a logical step for Adobe. To test it, I used two old promo videos: one recorded in 720p and another in noisy 480p that looked outdated on modern screens.

I opened Firefly Boards, uploaded both videos, and followed the new process. After selecting a clip, I clicked the small Topaz Astra icon in the editing toolbar – it’s easy to overlook if you don’t already know it exists. For the first video, I chose Precise mode and set the output to 4K. For the second clip, I picked Creative mode to see how much style the AI would add. While the Firefly video model worked on the videos in the background, I was free to adjust colors on other files without any interruption.

The final results were impressive. The 720p clip looked sharp and clear, ready to be uploaded to YouTube without extra work. The 480p video didn’t suddenly look like true 4K, but it improved enough to be used in a client’s brand video, which was a big step up from the original. I also liked how Astra naturally handled faces and movement, without creating the fake, smooth look that some AI video upscalers produce. The main downside for me was that higher-resolution videos took longer to process than I expected.

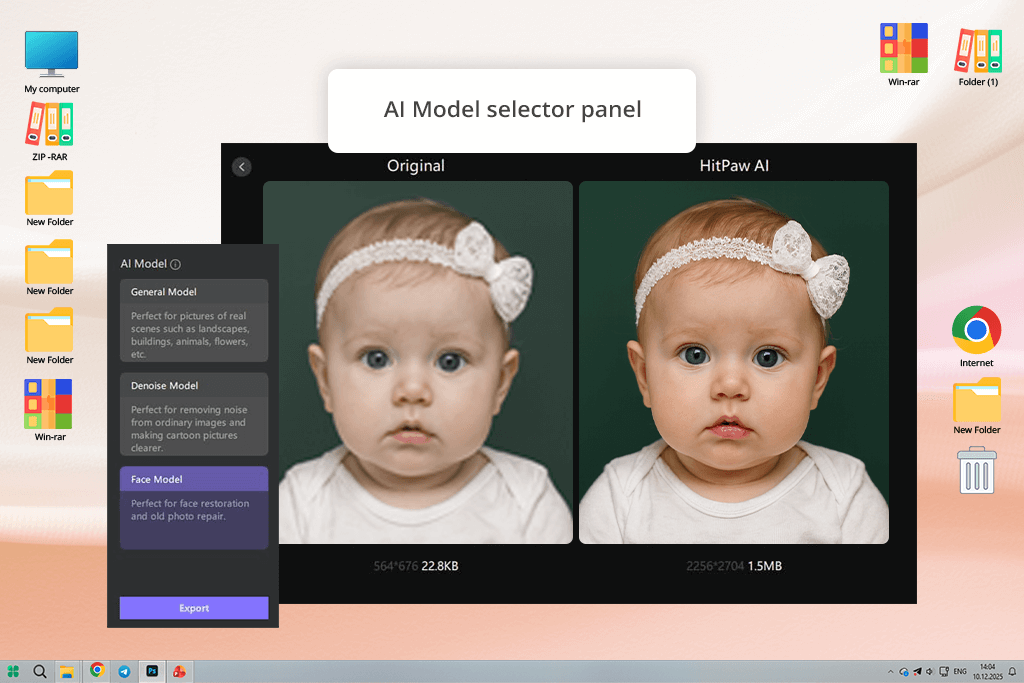

I probably wouldn’t have tested HitPaw if my coworker Nataly hadn’t mentioned it during a casual conversation. She described it as a simple tool that anyone can use, even without editing experience, so I decided to try it as an example of an easy-to-use option. I downloaded the desktop version because the online version of the AI video upscaler limits file uploads, and I tested it on two different videos: a talking-head interview and a short animated explainer.

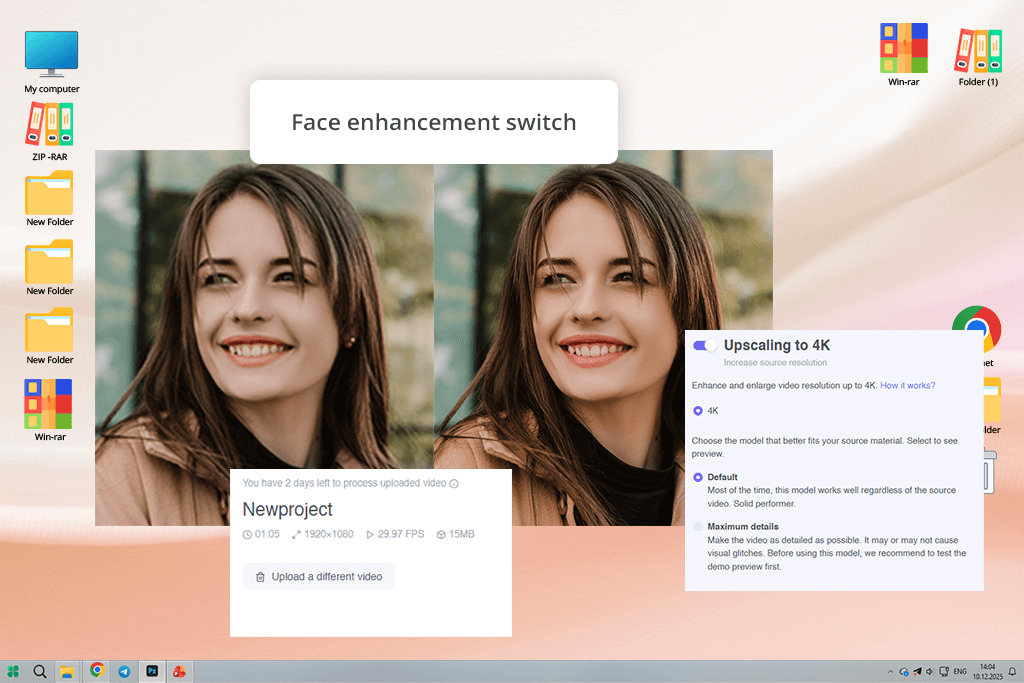

The first thing I noticed was how simple the layout is: you upload your video, choose an AI model (General, Face, Animation, or UHD), and then click “Enhance.” I used the Face model on the interview clip. It quickly improved brightness and made facial details clearer without any manual changes. However, when I reviewed the video closely, I noticed small visual artifacts around the edges during fast head movements. When I switched to the General model, the video looked smoother and more consistent.

Next, I tested the Animation model on the explainer video, and it performed well. The colors looked brighter, lines were sharper, and the 4K upscaling looked professional, especially for a tool that requires no manual adjustments. That said, the processing speed felt slow, and the free trial was very limited, making it hard to fully test the software. After buying the subscription, though, performance improved noticeably, with faster processing, better-quality exports, and smoother AI results overall.

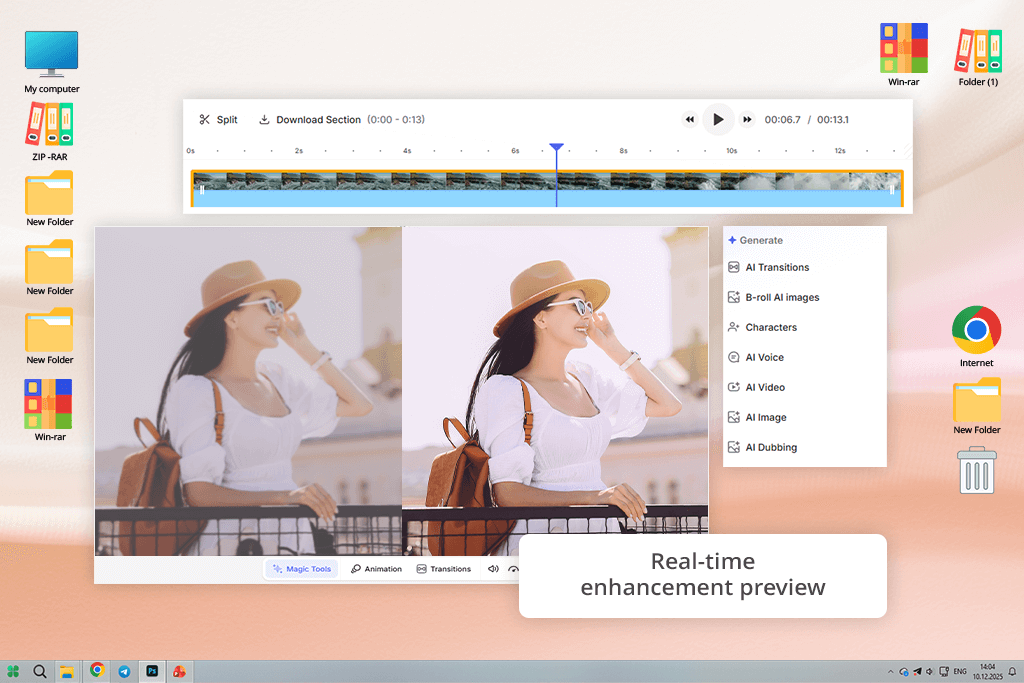

VEED is one of my go-to tools when I need to edit or upscale short videos for social media. Since I often create content for Instagram and YouTube Shorts, I enjoy the fact that everything works directly in the browser. There’s no need to install anything, no need for a powerful computer, and no technical stress. I can sit in a café with my laptop, upload a video, and start editing right away.

When I tried VEED’s AI Enhancer, I liked how smoothly it fit into the overall editing process. I uploaded several behind-the-scenes videos from a photoshoot and used AI upscaling together with noise reduction and color correction. One of the best features is the live preview: you can see the video become clearer and brighter as you adjust the settings. I also appreciate that subtitles, trimming, and basic edits are all available in the same workspace.

That said, VEED does have some limits. It works well for marketers and content creators who need speed, but the free version restricts export quality, and everything depends on having a good internet connection. You can’t work offline at all. Even so, VEED is strong when it comes to teamwork and fast edits. I often send preview versions to my teammates using the shared workspace, and it’s one of the easiest collaboration systems I’ve used in an online editor.

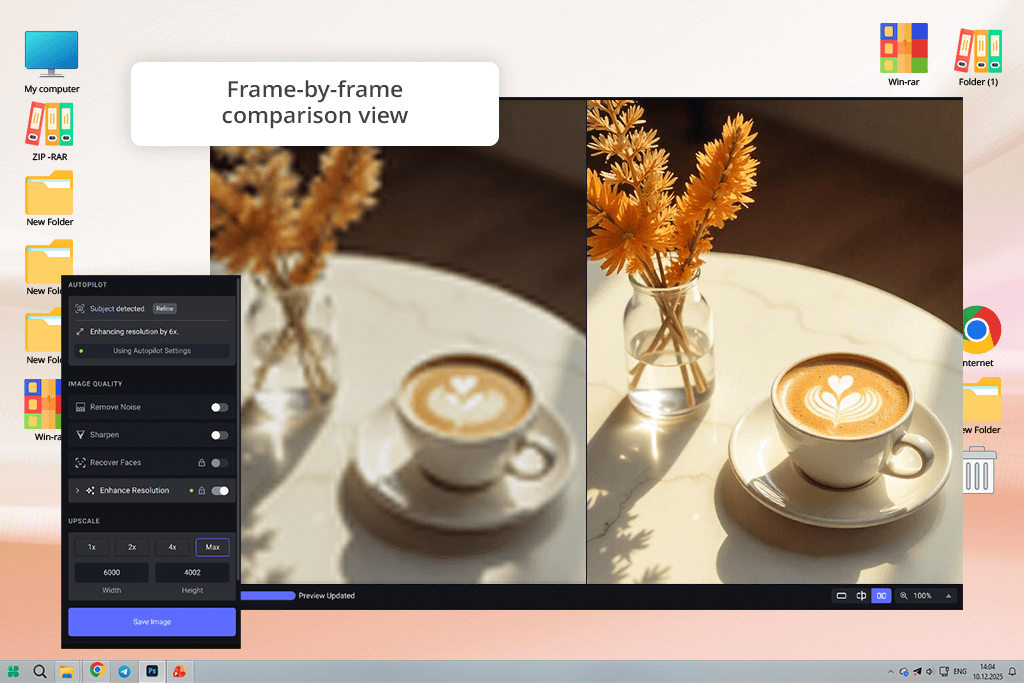

Because Adobe Firefly uses Topaz technology, I wanted to see how Topaz performs on its own. Firefly offers a clean and guided experience, but I was curious about using Topaz Video AI without any limits. I installed it on my main computer and tested it on different footage, including shaky handheld clips, dark indoor scenes, and old interlaced videos from a DSLR camera.

What stood out first was the level of control Topaz provides. Instead of a single “enhance” button, you choose specific AI models like Artemis, Proteus, or Iris, adjust sliders, and compare results frame by frame. For one low-light clip, I tested two models, one after the other, to see how they handled noise and movement. The side-by-side preview made it easy to spot differences: one kept more detail, while the other produced smoother motion.

However, this Topaz software demands strong hardware. Rendering videos uses a lot of system power, and my graphics card was pushed hard during large exports. Nonetheless, the results were worth the effort. The final videos looked cinematic, not artificial at all. Edges stayed clean, motion looked stable, and colors remained natural. This AI video upscaler isn’t ideal for quick social media edits, but for creators who care about detailed, frame-by-frame quality, Topaz is hard to beat.

You might not expect Adobe Premiere Pro to appear here, since it’s known as a professional editing program rather than a simple AI tool. But Adobe has been adding more AI features over time, and the upscaling option in Premiere Pro is a strong example. I’ve used Premiere for years for color grading and multi-camera edits, and I recently decided to properly test its Detail-Preserving Upscale effect.

I imported an old 720p product demo video. In the Effects panel, I searched for “Detail-Preserving Upscale,” applied it, and increased the scale to 200%, which takes a 1280×720 frame to 2560×1440 and brings it much closer to 4K. What impressed me most was the amount of control. Instead of letting AI handle everything, I could adjust noise reduction and texture details myself. The noise reduction setting worked well, removing pixelation while keeping text and faces sharp.
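To put the 200% setting in numbers, here is a small sketch of the arithmetic. It assumes the 720p source is a standard 1280×720 frame and that 4K means 3840×2160 UHD; the helper names are hypothetical and are not part of Premiere Pro.

```python
def scaled_resolution(width: int, height: int, scale_percent: float) -> tuple[int, int]:
    """Return the output size after a uniform scale given in percent."""
    factor = scale_percent / 100
    return round(width * factor), round(height * factor)


def scale_needed(src_height: int, target_height: int) -> float:
    """Return the uniform scale (in percent) needed to reach target_height."""
    return target_height / src_height * 100


# Assumed source: 1280x720 (720p). Assumed target: 3840x2160 (UHD 4K).
print(scaled_resolution(1280, 720, 200))  # (2560, 1440)
print(scale_needed(720, 2160))            # 300.0
```

Under those assumptions, 200% lands at 1440p, and a full jump from 720p to UHD 4K would need 300%.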

Premiere Pro is not cheap, and it takes time to learn. But if you already have it through an Adobe subscription, there’s no need to look for another video upscaling tool. It’s reliable, flexible, and gives you full control over every frame, which is exactly what you’d expect from professional software with built-in AI features.

I probably wouldn’t have found Neural Love on my own. My coworker Tati noticed an ad for it and suggested we try it out. We decided to do a quick but useful test using short phone videos and social-media-style clips, since those are the types of files our readers ask about most often. The goal was to see if a fully browser-based AI video upscaling tool could produce acceptable results without special hardware.

Using Neural Love was very straightforward. I uploaded a vertical video directly through the website, picked the enhancement options, and let the cloud-based AI handle everything. There were no complicated settings or sliders to adjust. The tool automatically improved sharpness, lighting, and facial clarity. Once the processing finished, the video looked brighter and cleaner. Faces were easier to see, and dark areas appeared more balanced.

However, the same simplicity can also be a drawback. There was no way to control how strong the AI effect was, and when working with more complex footage, I wished for more advanced options. The free version also limits file size, making the tool suitable for short or medium-length videos rather than large projects. Still, for creators who want a fast solution that works entirely online, Neural Love does what it promises.

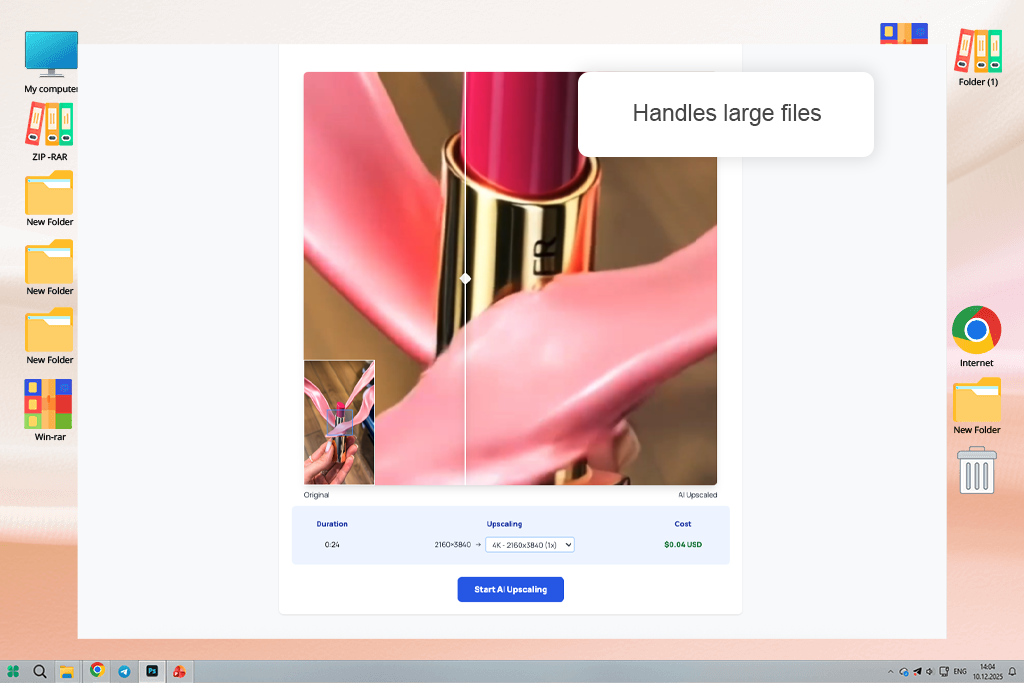

I tested Gigapixel with a specific purpose in mind. Sometimes we work with videos where smooth motion matters more than extreme sharpness, so instead of casual clips, I used a short cinematic video with camera motion and fine details – exactly the kind of footage where AI tools often struggle with flickering or unstable textures.

Gigapixel clearly treats video as a continuous sequence, not as separate images. I went through the timeline frame by frame, and the details stayed steady with no flashing textures or strange edge distortions. Even in scenes with a lot of movement, the AI video upscaling felt controlled and accurate. I was also impressed by how well it handled compressed videos, reducing blocky artifacts while keeping important details intact.

However, Gigapixel feels like software made for post-production professionals, not for fast or casual use. Processing times increased when I worked with higher frame rates, and the interface assumes you already understand things like resolution, video formats, and export settings such as ProRes.

To improve video quality quickly without installing extra software, I tested FreeUpscalerVideo on a few low-resolution clips. Because this AI video upscaler runs directly in the browser, uploading and processing videos felt quick and simple, with no setup required.

What caught my attention was the simplicity of the workflow: upload, upscale, and download in a matter of minutes. I concentrated on how it improves clarity and sharpness, particularly on relatively clean footage, where the results look substantially better.

I was really impressed by how easy it felt to use day to day. When you only need a quick quality boost for social media or content previews, it actually speeds up the process because it doesn’t overwhelm you with settings.

Katana Video stood out as a surprisingly efficient tool when I needed to upscale short product clips for social media without wasting time on heavy software. The AI video upscaler handled the process seamlessly: I just uploaded my clip and, within minutes, received cleaner, crisper output with far less noise and fewer artifacts.

Working with it was simple thanks to the fully cloud-based setup, especially when evaluating many clips back to back. The results didn’t look overly “AI-processed,” as is sometimes the case with similar tools, and the pricing made it practical for routine content creation rather than one-off tasks.

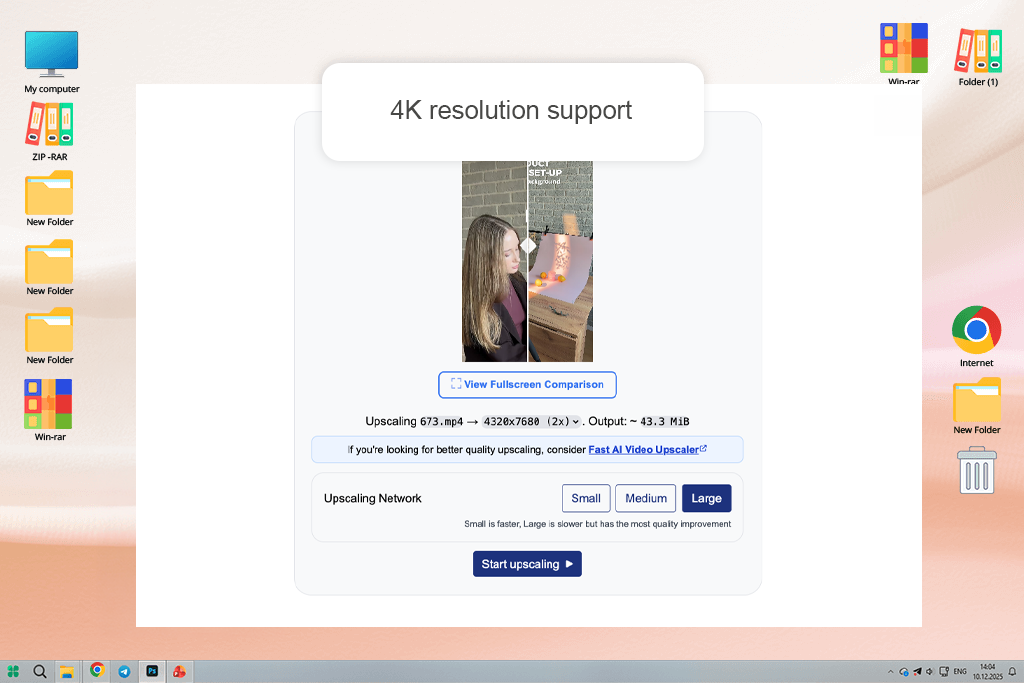

To keep our testing fair and practical, we focused on features that matter in real-world video upscaling, not marketing claims. I began by testing how well each tool handled resolution scaling, especially common upgrades like 720p to 1080p and 1080p to 4K. I closely examined whether the AI kept fine details like faces, text, and edges, or if it created fake-looking textures that seem sharp at first but fall apart on closer inspection.

Motion stability was another major focus. Nataly reviewed clips with camera movement, hand gestures, and fast scene changes, checking for frame-to-frame consistency. Flickering, unstable edges, and pulsing textures are common in AI upscaling, so she carefully moved through the footage frame by frame to catch problems that might not be obvious during normal playback.
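A rough way to pre-screen for this kind of flicker before stepping through by hand is to measure how much each frame differs from the previous one. The sketch below is only an illustration, not the method Nataly used: it assumes frames are already decoded into small grayscale grids, and the function names and threshold are hypothetical. Real camera motion also raises the difference, so anything it flags is just a candidate to inspect by eye.

```python
from statistics import mean

Frame = list[list[int]]  # grayscale frame: rows of 0-255 pixel values


def frame_difference(a: Frame, b: Frame) -> float:
    """Mean absolute pixel difference between two same-sized frames."""
    diffs = [
        abs(pa - pb)
        for row_a, row_b in zip(a, b)
        for pa, pb in zip(row_a, row_b)
    ]
    return mean(diffs)


def flicker_candidates(frames: list[Frame], threshold: float) -> list[int]:
    """Indices i where frame i differs from frame i-1 by more than threshold."""
    return [
        i
        for i in range(1, len(frames))
        if frame_difference(frames[i - 1], frames[i]) > threshold
    ]


# Tiny made-up clip: a steady 2x2 frame with one sudden brightness flash.
steady = [[100, 100], [100, 100]]
flash = [[140, 140], [140, 140]]
frames = [steady, steady, flash, steady]

print(flicker_candidates(frames, threshold=20))  # [2, 3]
```

Both the jump into the flash and the jump back out are flagged, which matches the pulsing pattern you would then confirm visually.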

Tati focused on how each tool handled noise and visual defects. She tested low-light videos, compressed clips, and older recordings to see if the AI could reduce noise without blurring details or making compression artifacts worse. In several cases, she compared different strength levels to find the point where the improvement stopped and the video started to look overprocessed.

Color behavior was also important. I paid attention to changes in brightness, contrast, and saturation, and checked whether skin tones still looked natural after upscaling.
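If you want a number to go with the visual check, one simple option is to compare average saturation and brightness before and after upscaling. This is a minimal sketch using Python's built-in `colorsys` module; it assumes you have already sampled matching RGB pixels (for example, from a skin-tone patch) from both versions, and `color_shift` is a hypothetical helper, not part of any tool in this list.

```python
import colorsys
from statistics import mean

RGB = tuple[int, int, int]  # one pixel, each channel 0-255


def mean_saturation_value(pixels: list[RGB]) -> tuple[float, float]:
    """Average HSV saturation and value (brightness) of the sampled pixels."""
    hsv = [colorsys.rgb_to_hsv(r / 255, g / 255, b / 255) for r, g, b in pixels]
    return mean(s for _, s, _ in hsv), mean(v for _, _, v in hsv)


def color_shift(before: list[RGB], after: list[RGB]) -> tuple[float, float]:
    """Change in average saturation and brightness (after minus before)."""
    sat_before, val_before = mean_saturation_value(before)
    sat_after, val_after = mean_saturation_value(after)
    return sat_after - sat_before, val_after - val_before


# Made-up samples from the same skin-tone patch, before and after upscaling.
original = [(200, 150, 120), (190, 140, 110)]
upscaled = [(220, 150, 100), (210, 140, 90)]

sat_shift, val_shift = color_shift(original, upscaled)
print(f"saturation shift: {sat_shift:+.3f}, brightness shift: {val_shift:+.3f}")
```

Positive values mean the upscaled version is more saturated or brighter than the source. How large a shift is acceptable is a judgment call, so treat the numbers as a prompt to look closer rather than a pass/fail score.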

Lastly, we reviewed how efficient each AI video upscaler was, including processing speed, preview accuracy, export reliability, and how well the software fit into real editing workflows rather than isolated test situations. This method helped us base our conclusions on everyday performance instead of one-off tests.